
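At the Spark Summit, every speaker agreed that shuffle is where performance suffers most, yet nobody had a real remedy for it. When I interviewed at Baidu for a Hadoop position I was asked about this too, and had to answer that I didn't know. This article is organized around three questions: how the shuffle process is divided, how the intermediate shuffle results are stored, and how the shuffle data is pulled over.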

Dividing the shuffle process

Spark's programming model is built on RDDs. Calling an operation such as reduceByKey or groupByKey on an RDD requires a shuffle. Let's take reduceByKey as the example again.

    def reduceByKey(func: (V, V) => V, numPartitions: Int): RDD[(K, V)] = {
      reduceByKey(new HashPartitioner(numPartitions), func)
    }

With reduceByKey we can set the number of reducers by hand. If we don't, the number may end up out of our control.

    def defaultPartitioner(rdd: RDD[_], others: RDD[_]*): Partitioner = {
      val bySize = (Seq(rdd) ++ others).sortBy(_.partitions.size).reverse
      for (r <- bySize if r.partitioner.isDefined) {
        return r.partitioner.get
      }
      if (rdd.context.conf.contains("spark.default.parallelism")) {
        new HashPartitioner(rdd.context.defaultParallelism)
      } else {
        new HashPartitioner(bySize.head.partitions.size)
      }
    }

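If the number of reducers is not specified, the defaults apply:

1. If one of the RDDs already has a partitioner (for example a custom one you defined), that partitioner is used.

2. Otherwise, if spark.default.parallelism is set, hash partitioning is used and the number of reducers is that value.

3. If that is not set either, the number of reducers follows the number of partitions of the input data. With Hadoop input this can be a very large number, so be careful.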
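To make the three rules concrete, here is a minimal sketch in plain Scala with no Spark dependency. `Input` and `choose` are hypothetical names invented for this illustration. An existing partitioner is modelled only by its partition count, which is a simplification of what `defaultPartitioner` really returns.

```scala
object DefaultPartitionCount {
  // Hypothetical stand-in for an RDD: its partition count and, if it already
  // has a partitioner, that partitioner's partition count.
  final case class Input(numPartitions: Int, existingPartitioner: Option[Int])

  // Returns the number of reduce partitions picked by the three rules.
  def choose(inputs: Seq[Input], defaultParallelism: Option[Int]): Int = {
    // Look at the inputs with the most partitions first.
    val bySize = inputs.sortBy(_.numPartitions).reverse
    bySize.flatMap(_.existingPartitioner).headOption // rule 1: reuse a partitioner
      .orElse(defaultParallelism)                    // rule 2: spark.default.parallelism
      .getOrElse(bySize.head.numPartitions)          // rule 3: largest input
  }
}
```

With two inputs of 4 and 200 partitions and nothing else configured, rule 3 picks 200 reducers, which is the situation the warning above is about.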

Once the partitioner is settled, reduceByKey does three things, which are the three RDD transformations described earlier.

    // Map side: first combine the values by key
    val combined = self.mapPartitionsWithContext((context, iter) => {
      aggregator.combineValuesByKey(iter, context)
    }, preservesPartitioning = true)
    // Reduce side: fetch the data
    val partitioned = new ShuffledRDD[K, C, (K, C)](combined, partitioner).setSerializer(serializer)
    // Merge the fetched data and run the reduce computation
    partitioned.mapPartitionsWithContext((context, iter) => {
      new InterruptibleIterator(context, aggregator.combineCombinersByKey(iter, context))
    }, preservesPartitioning = true)

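1. The first MapPartitionsRDD performs a map-side aggregation.

2. The ShuffledRDD is mainly responsible for fetching the data.

3. The second MapPartitionsRDD aggregates the fetched data once more.

4. Steps 1 and 3 can both involve spilling.

For how the aggregation itself works, go back to the article on RDDs.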
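The three steps can be imitated with ordinary Scala collections. This is only a sketch of the data flow, not Spark's implementation: there is no spilling, no serialization and no network, and `combine`, `bucketOf` and `reduceByKey` are hypothetical helpers written for this example.

```scala
object ReduceByKeySketch {
  // Steps 1 and 3: merge all values that share a key within one partition.
  def combine[K, V](records: Seq[(K, V)], func: (V, V) => V): Map[K, V] =
    records.groupBy(_._1).map { case (k, kvs) => k -> kvs.map(_._2).reduce(func) }

  // Non-negative modulo of the key's hash, the idea behind hash partitioning.
  def bucketOf(key: Any, numReducers: Int): Int = {
    val raw = key.hashCode % numReducers
    if (raw < 0) raw + numReducers else raw
  }

  def reduceByKey[K, V](mapPartitions: Seq[Seq[(K, V)]],
                        numReducers: Int,
                        func: (V, V) => V): Map[K, V] = {
    // 1. Map-side combine, one result per map partition.
    val combined: Seq[Map[K, V]] = mapPartitions.map(p => combine(p, func))
    // 2. "Shuffle": reducer r collects its keys from every map output.
    val fetched: Seq[Seq[(K, V)]] = (0 until numReducers).map { r =>
      combined.flatMap(m => m.toSeq.filter { case (k, _) => bucketOf(k, numReducers) == r })
    }
    // 3. Reduce-side combine, then gather the per-reducer results.
    fetched.map(p => combine(p, func)).foldLeft(Map.empty[K, V])(_ ++ _)
  }
}
```

Because every key lands in exactly one reducer, merging the per-reducer maps at the end never overwrites anything.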

How shuffle intermediate results are stored

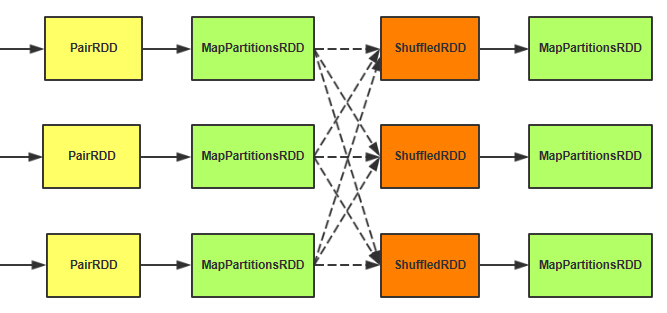

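When a job is submitted, DAGScheduler splits the shuffle into two stages, map and reduce (what I have been calling "before shuffle" and "after shuffle"). The exact split point is at the dashed line in the figure above.

The map-side work is submitted as a ShuffleMapTask, and TaskRunner eventually calls its runTask method.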

    override def runTask(context: TaskContext): MapStatus = {
      val numOutputSplits = dep.partitioner.numPartitions
      metrics = Some(context.taskMetrics)
      val blockManager = SparkEnv.get.blockManager
      val shuffleBlockManager = blockManager.shuffleBlockManager
      var shuffle: ShuffleWriterGroup = null
      var success = false
      try {
        // With no serializer set, the default JavaSerializer is used; spark.serializer can select another one
        val ser = Serializer.getSerializer(dep.serializer)
        // Instantiate the writers; number of writers = numOutputSplits = the number of reducers discussed above
        shuffle = shuffleBlockManager.forMapTask(dep.shuffleId, partitionId, numOutputSplits, ser)
        // Iterate over the RDD's elements, compute each one's bucketId from its key,
        // then write it through the writer for that bucketId
        for (elem <- rdd.iterator(split, context)) {
          val pair = elem.asInstanceOf[Product2[Any, Any]]
          val bucketId = dep.partitioner.getPartition(pair._1)
          shuffle.writers(bucketId).write(pair)
        }
        // Commit the writes and compute the size of each bucket block
        var totalBytes = 0L
        var totalTime = 0L
        val compressedSizes: Array[Byte] = shuffle.writers.map { writer: BlockObjectWriter =>
          writer.commit()
          writer.close()
          val size = writer.fileSegment().length
          totalBytes += size
          totalTime += writer.timeWriting()
          MapOutputTracker.compressSize(size)
        }
        // Update the shuffle metrics
        val shuffleMetrics = new ShuffleWriteMetrics
        shuffleMetrics.shuffleBytesWritten = totalBytes
        shuffleMetrics.shuffleWriteTime = totalTime
        metrics.get.shuffleWriteMetrics = Some(shuffleMetrics)
        success = true
        new MapStatus(blockManager.blockManagerId, compressedSizes)
      } catch { case e: Exception =>
        // Something failed: revert the partial writes and close the writers
        if (shuffle != null && shuffle.writers != null) {
          for (writer <- shuffle.writers) {
            writer.revertPartialWrites()
            writer.close()
          }
        }
        throw e
      } finally {
        // Release the writers
        if (shuffle != null && shuffle.writers != null) {
          try {
            shuffle.releaseWriters(success)
          } catch {
            case e: Exception => logError("Failed to release shuffle writers", e)
          }
        }
        // Run the registered callbacks, which usually do cleanup work
        context.executeOnCompleteCallbacks()
      }
    }

For each record, its key determines its bucketId, and the record is then written through that bucket's writer.
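As a rough illustration of that loop, the sketch below routes records into in-memory buffers instead of disk writers. `writeBuckets` and `nonNegativeMod` are hypothetical helpers for this example, and the hash-modulo rule is an assumption that mirrors what a hash partitioner does, since `getPartition` depends on the partitioner in use.

```scala
import scala.collection.mutable.ArrayBuffer

object BucketWriteSketch {
  // Keeps the result in [0, mod) even when the hash code is negative.
  def nonNegativeMod(x: Int, mod: Int): Int = {
    val raw = x % mod
    if (raw < 0) raw + mod else raw
  }

  // One buffer per bucket plays the role of one writer per reducer.
  def writeBuckets[K, V](records: Iterator[(K, V)], numBuckets: Int): Vector[Vector[(K, V)]] = {
    val writers = Vector.fill(numBuckets)(ArrayBuffer.empty[(K, V)])
    for (pair <- records) {
      val bucketId = nonNegativeMod(pair._1.hashCode, numBuckets)
      writers(bucketId) += pair
    }
    writers.map(_.toVector)
  }
}
```

Each inner vector is what a single reducer would later fetch from this map task.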

Next, let's look at the forMapTask method of ShuffleBlockManager.


    def forMapTask(shuffleId: Int, mapId: Int, numBuckets: Int, serializer: Serializer) = {
      new ShuffleWriterGroup {
        shuffleStates.putIfAbsent(shuffleId, new ShuffleState(numBuckets))
        private val shuffleState = shuffleStates(shuffleId)
        private var fileGroup: ShuffleFileGroup = null
        val writers: Array[BlockObjectWriter] = if (consolidateShuffleFiles) {
          fileGroup = getUnusedFileGroup()
          Array.tabulate[BlockObjectWriter](numBuckets) { bucketId =>
            val blockId = ShuffleBlockId(shuffleId, mapId, bucketId)
            // Pick a file from an existing file group, one file per bucket:
            // data going to the same reducer is written to the same file
            blockManager.getDiskWriter(blockId, fileGroup(bucketId), serializer, bufferSize)
          }
        } else {
          Array.tabulate[BlockObjectWriter](numBuckets) { bucketId =>
            // Create one file per blockId; the file count is maps * reducers
            val blockId = ShuffleBlockId(shuffleId, mapId, bucketId)
            val blockFile = blockManager.diskBlockManager.getFile(blockId)
            if (blockFile.exists) {
              if (blockFile.delete()) {
                logInfo(s"Removed existing shuffle file $blockFile")
              } else {
                logWarning(s"Failed to remove existing shuffle file $blockFile")
              }
            }
            blockManager.getDiskWriter(blockId, blockFile, serializer, bufferSize)
          }
        }
        // ... (the excerpt stops here; the rest of ShuffleWriterGroup is not shown)
      }
    }

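1. The map-side intermediate results are written to local disk, not kept in memory.

2. By default an executor produces M*R intermediate files (M = number of map tasks, R = number of reducers). With spark.shuffle.consolidateFiles set to true, map tasks write into a file group of R files instead (the code obtains one through getUnusedFileGroup()): results destined for the same reducer, as identified by bucketId, go into the same file.

3. With consolidateFiles there is one file per reducer, and the start offset of each map task's output in that file is recorded. A lookup first uses reduceId to find the file, then uses mapId to find the start offset in the index. The length is offset(mapId + 1) - offset(mapId), and together these determine a FileSegment(file, offset, length).

4. Finally, once the data has been stored, the task returns new MapStatus(blockManager.blockManagerId, compressedSizes), which carries the blockManagerId together with the block sizes.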
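The offset arithmetic in point 3 is easy to get wrong, so here is a small worked sketch. `FileSegment` is redefined locally with a plain string for the file, and `segmentFor` is a hypothetical helper. Using the file length as the end of the last map task's segment is my own assumption about how the boundary case is handled.

```scala
object ConsolidatedFileSketch {
  // Simplified local stand-in for Spark's FileSegment.
  final case class FileSegment(file: String, offset: Long, length: Long)

  // offsets(i) is where map task i's output starts inside the reducer's file.
  def segmentFor(file: String,
                 offsets: IndexedSeq[Long],
                 fileLength: Long,
                 mapId: Int): FileSegment = {
    val start = offsets(mapId)
    // length = offset(mapId + 1) - offset(mapId); the last segment runs to end of file.
    val end = if (mapId + 1 < offsets.length) offsets(mapId + 1) else fileLength
    FileSegment(file, start, end - start)
  }
}
```

For offsets 0, 100 and 250 in a 400-byte file, map task 1 owns bytes 100 to 249, a segment of length 150.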

My own take: the shuffle here differs little from Hadoop's mechanism. Will an engine like Tez catch up with Spark's speed? Let's wait and see!

How shuffle data is pulled over

After a ShuffleMapTask finishes, control eventually reaches the handleTaskCompletion method of DAGScheduler (for the steps in between, see the earlier illustrated article on the job life cycle).

    case smt: ShuffleMapTask =>
      val status = event.result.asInstanceOf[MapStatus]
      val execId = status.location.executorId
      if (failedEpoch.contains(execId) && smt.epoch <= failedEpoch(execId)) {
        logInfo("Ignoring possibly bogus ShuffleMapTask completion from " + execId)
      } else {
        stage.addOutputLoc(smt.partitionId, status)
      }
      if (runningStages.contains(stage) && pendingTasks(stage).isEmpty) {
        markStageAsFinished(stage)
        if (stage.shuffleDep.isDefined) {
          // Only a real map stage has this dependency; for a reduce stage it is None
          mapOutputTracker.registerMapOutputs(
            stage.shuffleDep.get.shuffleId,
            stage.outputLocs.map(list => if (list.isEmpty) null else list.head).toArray,
            changeEpoch = true)
        }
        clearCacheLocs()
        if (stage.outputLocs.exists(_ == Nil)) {
          // Some tasks failed, so the stage has to be resubmitted
          submitStage(stage)
        } else {
          // Submit the next batch of tasks
        }
      }

1. The result is added to the stage's outputLocs array, which stores the mapping by data partition id: partitionId -> MapStatus.

2. When the stage finishes, the outputLocs of this shuffle are recorded in mapOutputTracker through its registerMapOutputs method.
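The bookkeeping in these two points can be pictured with a toy model. `MapStatus` and `Stage` below are simplified, hypothetical versions written for this sketch (sizes are plain longs, locations are strings), and `Option` replaces the `null` that the real code registers for a missing output.

```scala
object OutputLocsSketch {
  // Simplified stand-in: where the map output lives and how big each bucket is.
  final case class MapStatus(location: String, sizes: Vector[Long])

  final class Stage(numPartitions: Int) {
    // One list of known output locations per map partition, initially empty.
    private val outputLocs = Array.fill[List[MapStatus]](numPartitions)(Nil)

    def addOutputLoc(partitionId: Int, status: MapStatus): Unit =
      outputLocs(partitionId) = status :: outputLocs(partitionId)

    // Partitions with no output yet; a non-empty result means the stage must be resubmitted.
    def missingPartitions: Seq[Int] =
      outputLocs.indices.filter(i => outputLocs(i).isEmpty)

    // What would be handed to the tracker: the newest status per partition.
    def toRegister: Vector[Option[MapStatus]] = outputLocs.map(_.headOption).toVector
  }
}
```

A stage with three partitions that has only heard back from partitions 0 and 2 reports partition 1 as missing.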

Once this stage has finished, the ShuffledRDD runs. Let's look at its compute function.

    SparkEnv.get.shuffleFetcher.fetch[P](shuffledId, split.index, context, ser)

It fetches the data through the fetch method of ShuffleFetcher. The concrete implementation is in BlockStoreShuffleFetcher.

    override def fetch[T](
        shuffleId: Int,
        reduceId: Int,
        context: TaskContext,
        serializer: Serializer)
      : Iterator[T] =
    {
      val blockManager = SparkEnv.get.blockManager
      val startTime = System.currentTimeMillis
      // mapOutputTracker is also split into Master and Worker: the Worker asks the Master
      // for the MapStatus entries of this reduce, mainly (BlockManagerId, size)
      val statuses = SparkEnv.get.mapOutputTracker.getServerStatuses(shuffleId, reduceId)
      // One BlockManagerId maps to the sizes of several files
      val splitsByAddress = new HashMap[BlockManagerId, ArrayBuffer[(Int, Long)]]
      for (((address, size), index) <- statuses.zipWithIndex) {
        splitsByAddress.getOrElseUpdate(address, ArrayBuffer()) += ((index, size))
      }
      // Build the mapping from BlockManagerId to BlockId. To my surprise, the mapId in
      // ShuffleBlockId is simply the index of the status in the array (0, 1, 2, ...)
      val blocksByAddress: Seq[(BlockManagerId, Seq[(BlockId, Long)])] = splitsByAddress.toSeq.map {
        case (address, splits) =>
          (address, splits.map(s => (ShuffleBlockId(shuffleId, s._1, reduceId), s._2)))
      }
      // unpackBlock is really a validation function: every block should come with an
      // Iterator, and if it is missing an error is raised
      def unpackBlock(blockPair: (BlockId, Option[Iterator[Any]])) : Iterator[T] = {
        val blockId = blockPair._1
        val blockOption = blockPair._2
        blockOption match {
          case Some(block) => {
            block.asInstanceOf[Iterator[T]]
          }
          case None => {
            blockId match {
              case ShuffleBlockId(shufId, mapId, _) =>
                val address = statuses(mapId.toInt)._1
                throw new FetchFailedException(address, shufId.toInt, mapId.toInt, reduceId, null)
              case _ =>
                throw new SparkException("Failed to get block " + blockId + ", which is not a shuffle block")
            }
          }
        }
      }
      // Get all the blocks this reduce needs from blockManager and attach the validation function
      val blockFetcherItr = blockManager.getMultiple(blocksByAddress, serializer)
      val itr = blockFetcherItr.flatMap(unpackBlock)
      val completionIter = CompletionIterator[T, Iterator[T]](itr, {
        // When the CompletionIterator is exhausted, this code runs and records the collected metrics
        val shuffleMetrics = new ShuffleReadMetrics
        shuffleMetrics.shuffleFinishTime = System.currentTimeMillis
        shuffleMetrics.fetchWaitTime = blockFetcherItr.fetchWaitTime
        shuffleMetrics.remoteBytesRead = blockFetcherItr.remoteBytesRead
        shuffleMetrics.totalBlocksFetched = blockFetcherItr.totalBlocks
        shuffleMetrics.localBlocksFetched = blockFetcherItr.numLocalBlocks
        shuffleMetrics.remoteBlocksFetched = blockFetcherItr.numRemoteBlocks
        context.taskMetrics.shuffleReadMetrics = Some(shuffleMetrics)
      })
      new InterruptibleIterator[T](context, completionIter)
    }

1. MapOutputTrackerWorker asks MapOutputTrackerMaster for the map output information of the shuffle.

2. The map output information is turned into a mapping BlockManagerId --> Array(BlockId, size).

3. The blocks are pulled in bulk through BlockManager's getMultiple method.

4. An iterable Iterator is returned, and the related metrics are updated.
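Step 2 is the part that is easiest to misread, so here is a minimal sketch of it on plain data. Addresses are plain strings rather than BlockManagerId values, `ShuffleBlockId` is redefined locally, and `blocksByAddress` is a hypothetical helper. The point is only that the position of a status in the array becomes the mapId of the block.

```scala
object BlocksByAddressSketch {
  // Local stand-in for Spark's ShuffleBlockId.
  final case class ShuffleBlockId(shuffleId: Int, mapId: Int, reduceId: Int)

  // statuses(i) = (address holding map task i's output, size of this reducer's block there)
  def blocksByAddress(shuffleId: Int,
                      reduceId: Int,
                      statuses: IndexedSeq[(String, Long)]): Map[String, Seq[(ShuffleBlockId, Long)]] =
    statuses.zipWithIndex
      .groupBy { case ((address, _), _) => address }
      .map { case (address, entries) =>
        address -> entries.map { case ((_, size), mapId) =>
          (ShuffleBlockId(shuffleId, mapId, reduceId), size)
        }
      }
}
```

Grouping by address lets the reducer ask each node for all of its blocks in one request instead of one request per map task.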

Let's continue with the getMultiple method.

    def getMultiple(
        blocksByAddress: Seq[(BlockManagerId, Seq[(BlockId, Long)])],
        serializer: Serializer): BlockFetcherIterator = {
      val iter =
        if (conf.getBoolean("spark.shuffle.use.netty", false)) {
          new BlockFetcherIterator.NettyBlockFetcherIterator(this, blocksByAddress, serializer)
        } else {
          new BlockFetcherIterator.BasicBlockFetcherIterator(this, blocksByAddress, serializer)
        }
      iter.initialize()
      iter
    }

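There are two cases, Netty and Basic. I won't cover Basic: it simply fetches the data from the specified BlockManager through ConnectionManager, which the previous article happened to cover.

Let's talk about the Netty one. It only takes effect when it is enabled through configuration; I wonder whether it performs any better?

Looking at the initialize method of NettyBlockFetcherIterator and then at that of BasicBlockFetcherIterator, I noticed that Basic cannot fetch more than 48 MB of data at the same time.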

    override def initialize() {
      // Separate local requests from remote ones; returns the remote FetchRequests
      val remoteRequests = splitLocalRemoteBlocks()
      // Fetch in random order
      for (request <- Utils.randomize(remoteRequests)) {
        fetchRequestsSync.put(request)
      }
      // By default 6 threads are started to do the fetching
      copiers = startCopiers(conf.getInt("spark.shuffle.copier.threads", 6))
      // Read the local blocks
      getLocalBlocks()
    }
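The 48 MB cap mentioned for the Basic fetcher can be pictured as an admission check on pending requests. The sketch below is my own guess at the shape of such a check, not code taken from Spark: `admit` is a hypothetical function, and letting an oversized request through when nothing is in flight is an assumption made so that a single large block cannot stall the fetch forever.

```scala
object InFlightLimitSketch {
  // The 48 MB figure comes from the text above.
  val maxBytesInFlight: Long = 48L * 1024 * 1024

  // Returns (request sizes sent now, request sizes still waiting).
  @annotation.tailrec
  def admit(pending: List[Long],
            inFlight: Long,
            sent: List[Long] = Nil): (List[Long], List[Long]) = pending match {
    // Send the next request if nothing is in flight or it still fits under the cap.
    case size :: rest if inFlight == 0 || inFlight + size <= maxBytesInFlight =>
      admit(rest, inFlight + size, size :: sent)
    // Otherwise stop; the remaining requests wait until earlier ones complete.
    case _ => (sent.reverse, pending)
  }
}
```

With three 20 MB requests and nothing in flight, the first two are sent and the third waits, since a third would bring the total to 60 MB.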

In the sendRequest method of NettyBlockFetcherIterator, the fetch turns out to be implemented through ShuffleCopier.

    val cpier = new ShuffleCopier(blockManager.conf)
    cpier.getBlocks(cmId, req.blocks, putResult)

These two lines are what the Netty client calls; I'm not familiar with this part. On the server side, DiskBlockManager starts a ShuffleSender service internally, and the actual handling logic lives in FileServerHandler.

It returns a FileSegment through getBlockLocation. The code below is the getBlockLocation method of ShuffleBlockManager.

    def getBlockLocation(id: ShuffleBlockId): FileSegment = {
      // Search all file groups associated with this shuffle.
      val shuffleState = shuffleStates(id.shuffleId)
      for (fileGroup <- shuffleState.allFileGroups) {
        val segment = fileGroup.getFileSegmentFor(id.mapId, id.reduceId)
        if (segment.isDefined) { return segment.get }
      }
      throw new IllegalStateException("Failed to find shuffle block: " + id)
    }

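It first finds the ShuffleState by shuffleId, then finds the file by reduceId, and finally uses mapId to locate the file segment. This raises a question, though: with consolidateFiles enabled, all the data one reducer needs sits in a single file, so couldn't the whole file be returned at once instead of being read in N pieces, one per map task? Or is the concern that sending one large file in a single transfer is more likely to fail? I don't know.

That concludes the whole process. Shuffle has clearly received some optimization, but these options are not enabled by default. If you need them, enable them yourself and see how they work.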
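For anyone who wants to try, the two switches that appear in this article could be set on a SparkConf roughly as follows. This is a configuration sketch that needs Spark on the classpath: the application name is a placeholder, and the property names are the ones read in the code shown above, so check them against the Spark version you run.

```scala
import org.apache.spark.SparkConf

object ShuffleTuningSketch {
  // Builds a configuration with both shuffle options from this article turned on.
  def tunedConf(): SparkConf =
    new SparkConf()
      .setAppName("shuffle-tuning-sketch") // placeholder name
      // Write map output into consolidated files instead of M*R separate files.
      .set("spark.shuffle.consolidateFiles", "true")
      // Use the Netty-based fetcher instead of the Basic one.
      .set("spark.shuffle.use.netty", "true")
}
```

Comparing job times with and without each setting is the simplest way to see whether they help for your workload.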
