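Preface

Hadoop's MapReduce environment is a complex programming environment, so we should simplify the process of building a MapReduce project as much as possible. Maven is a very good automated project build tool; it frees us from complicated environment configuration and thereby standardizes the development process. So before writing any MapReduce code, let's spend a little time sharpening the knife! Of course, there are alternatives to Maven, such as Gradle (recommended) and Ivy.

Several later articles on MapReduce development will all rely on the Maven-built MapReduce environment described in this article.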

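1. Introduction to Maven

Apache Maven is a project management and automated build tool for Java, provided by the Apache Software Foundation. Based on the concept of the Project Object Model (POM), Maven uses a central piece of information to manage a project's build, reporting, and documentation steps. It was once a subproject of the Jakarta project and is now an independent Apache project.

Maven's developers state on their development website that Maven's goal is to make project builds easier. It links the different stages of development (compiling, packaging, testing, and releasing) into a coherent whole and produces consistent, high-quality project information, so that project members receive timely feedback. Maven effectively supports test-first development and continuous integration, and reflects a software development philosophy that encourages communication and prompt feedback. If reuse in Ant is built on "copy and paste", Maven achieves genuine reuse of project build logic through its plugin mechanism.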

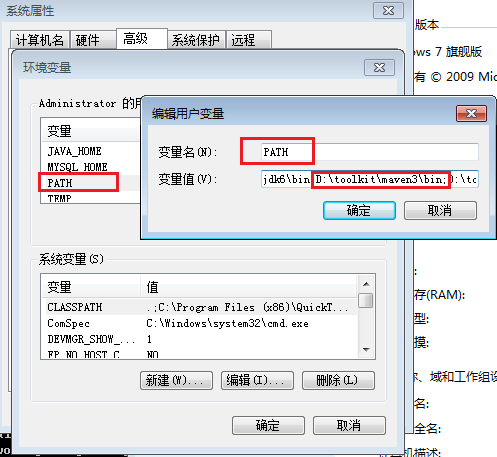

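2. Installing Maven (Windows)

Download Maven: http://maven.apache.org/download.cgi

Download the latest xxx-bin.zip file and extract it on Windows to D:\toolkit\maven3

Add the maven/bin directory to the PATH environment variable.

Then open a command prompt and type mvn; we will see the output of the mvn command.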

~ C:\Users\Administrator>mvn

[INFO] Scanning for projects...

[INFO] ------------------------------------------------------------------------

[INFO] BUILD FAILURE

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 0.086s

[INFO] Finished at: Mon Sep 30 18:26:58 CST 2013

[INFO] Final Memory: 2M/179M

[INFO] ------------------------------------------------------------------------

[ERROR] No goals have been specified for this build. You must specify a valid lifecycle phase or a goal in the format <plugin-prefix>:<goal> or <plugin-group-id>:<plugin-artifact-id>[:<plugin-version>]:<goal>. Available lifecycle phases are: validate, initialize, generate-sources, process-sources, generate-resources, process-resources, compile, process-classes, generate-test-sources, process-test-sources, generate-test-resources, process-test-resources, test-compile, process-test-classes, test, prepare-package, package, pre-integration-test, integration-test, post-integration-test, verify, install, deploy, pre-clean, clean, post-clean, pre-site, site, post-site, site-deploy. -> [Help 1]

[ERROR]

[ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.

[ERROR] Re-run Maven using the -X switch to enable full debug logging.

[ERROR]

[ERROR] For more information about the errors and possible solutions, please read the following articles:

[ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/NoGoalSpecifiedException
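The lifecycle phases listed in the error message are ordered, and requesting one phase runs every earlier phase of the same lifecycle first. The following sketch illustrates that rule for the default lifecycle only. The class `LifecyclePhases` and the method `phasesUpTo` are hypothetical names used for illustration; they are not part of Maven.

```java
import java.util.Arrays;
import java.util.List;

class LifecyclePhases {
    // Phases of Maven's default lifecycle, in the order printed by the error message.
    static final List<String> DEFAULT_LIFECYCLE = Arrays.asList(
        "validate", "initialize", "generate-sources", "process-sources",
        "generate-resources", "process-resources", "compile", "process-classes",
        "generate-test-sources", "process-test-sources", "generate-test-resources",
        "process-test-resources", "test-compile", "process-test-classes", "test",
        "prepare-package", "package", "pre-integration-test", "integration-test",
        "post-integration-test", "verify", "install", "deploy");

    // Returns every phase that runs when the given phase is requested,
    // i.e. the requested phase and all phases that precede it.
    static List<String> phasesUpTo(String phase) {
        int idx = DEFAULT_LIFECYCLE.indexOf(phase);
        if (idx < 0) {
            throw new IllegalArgumentException("Not a default-lifecycle phase: " + phase);
        }
        return DEFAULT_LIFECYCLE.subList(0, idx + 1);
    }

    public static void main(String[] args) {
        // "install" implies compile, test, package and everything in between.
        System.out.println(phasesUpTo("install"));
    }
}
```

This is why a single `mvn install` is enough to compile, test, and package a project before copying it into the local repository.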

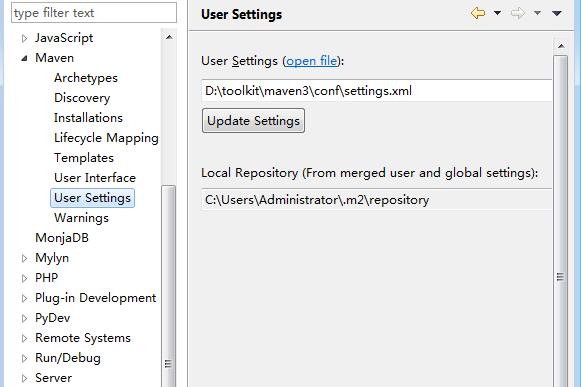

��װEclipse��Maven�����Maven Integration for Eclipse

Maven��Eclipse�������

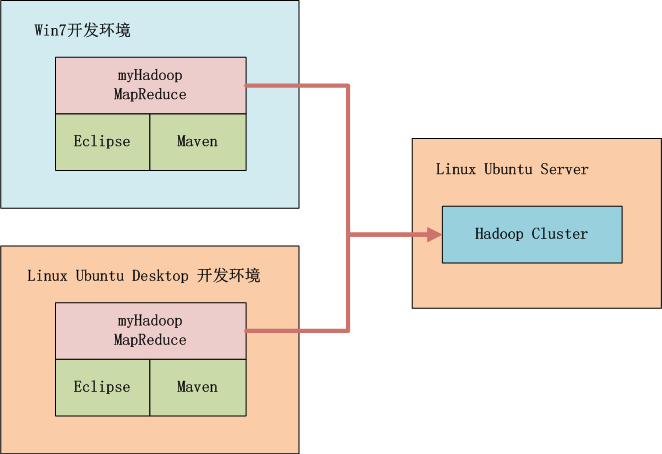

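3. The Hadoop development environment

As the figure above shows, we can develop on Windows or on Linux, and either start Hadoop locally or call a remote Hadoop; in every case the standard tools are Maven and Eclipse.

Hadoop cluster system environment: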

Linux: Ubuntu 12.04.2 LTS 64bit Server

Java: 1.6.0_29

Hadoop: hadoop-1.0.3�����ڵ㣬IP:192.168.1.210

4. ��Maven����Hadoop����

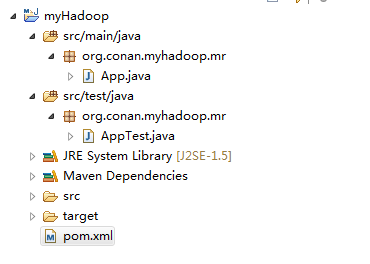

1. ��Maven����һ��������Java��Ŀ

2. ������Ŀ��eclipse

3. ����hadoop��������pom.xml

4. ��������

5. ��Hadoop��Ⱥ��������hadoop�����ļ�

6. ����host

1). ��Maven����һ��������Java��Ŀ

~ D:\workspace\java>mvn archetype:generate -DarchetypeGroupId=

org.apache.maven.archetypes -DgroupId=org.conan.myhadoop.mr

-DartifactId=myHadoop -DpackageName=org.conan.myhadoop.mr -Dversion=

1.0-SNAPSHOT -DinteractiveMode=false

[INFO] Scanning for projects...

[INFO]

[INFO] ---------------------------------------------

[INFO] Building Maven Stub Project (No POM) 1

[INFO] -----------------------------------------------

[INFO]

[INFO] >>> maven-archetype-plugin:2.2:generate (default-cli) @ standalone-pom >>>

[INFO]

[INFO] <<< maven-archetype-plugin:2.2:generate (default-cli) @ standalone-pom <<<

[INFO]

[INFO] --- maven-archetype-plugin:2.2:generate (default-cli) @ standalone-pom ---

[INFO] Generating project in Batch mode

[INFO] No archetype defined. Using maven-archetype-quickstart (org.apache.maven.archetypes:maven-archetype-quickstart:1.0)

Downloading: http://repo.maven.apache.org/maven2/org/apache/maven/archetypes/maven-archetype-quickstart/1.0/maven-archetype-quickstart-1.0.jar

Downloaded: http://repo.maven.apache.org/maven2/org/apache/maven/archetypes/maven-archetype-quickstart/1.0/maven-archetype-quickstart-1.0.jar (5 KB at 4.3 KB/sec)

Downloading: http://repo.maven.apache.org/maven2/org/apache/maven/archetypes/maven-archetype-quickstart/1.0/maven-archetype-quickstart-1.0.pom

Downloaded: http://repo.maven.apache.org/maven2/org/apache/maven/archetypes/maven-archetype-quickstart/1.0/maven-archetype-quickstart-1.0.pom (703 B at 1.6 KB/sec)

[INFO] --------------------------------------------------

[INFO] Using following parameters for creating project from Old (1.x) Archetype: maven-archetype-quickstart:1.0

[INFO] ----------------------------------------------------

[INFO] Parameter: groupId, Value: org.conan.myhadoop.mr

[INFO] Parameter: packageName, Value: org.conan.myhadoop.mr

[INFO] Parameter: package, Value: org.conan.myhadoop.mr

[INFO] Parameter: artifactId, Value: myHadoop

[INFO] Parameter: basedir, Value: D:\workspace\java

[INFO] Parameter: version, Value: 1.0-SNAPSHOT

[INFO] project created from Old (1.x) Archetype in dir: D:\workspace\java\myHadoop

[INFO] ----------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ----------------------------------------------------

[INFO] Total time: 8.896s

[INFO] Finished at: Sun Sep 29 20:57:07 CST 2013

[INFO] Final Memory: 9M/179M

[INFO] ----------------------------------------------------

Enter the project directory and run the mvn command:

~ D:\workspace\java>cd myHadoop

~ D:\workspace\java\myHadoop>mvn clean install

[INFO]

[INFO] --- maven-jar-plugin:2.3.2:jar (default-jar) @ myHadoop ---

[INFO] Building jar: D:\workspace\java\myHadoop\target\myHadoop-1.0-SNAPSHOT.jar

[INFO]

[INFO] --- maven-install-plugin:2.3.1:install (default-install) @ myHadoop ---

[INFO] Installing D:\workspace\java\myHadoop\target\myHadoop-1.0-SNAPSHOT.jar to C:\Users\Administrator\.m2\repository\org\conan\myhadoop\mr\myHadoop\1.0-SNAPSHOT\myHadoop-1.0-SNAPSHOT.jar

[INFO] Installing D:\workspace\java\myHadoop\pom.xml to C:\Users\Administrator\.m2\repository\org\conan\myhadoop\mr\myHadoop\1.0-SNAPSHOT\myHadoop-1.0-SNAPSHOT.pom

[INFO] -------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] -------------------------------------------------------

[INFO] Total time: 4.348s

[INFO] Finished at: Sun Sep 29 20:58:43 CST 2013

[INFO] Final Memory: 11M/179M

[INFO] -------------------------------------------------------
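The install paths in the log follow Maven's local repository layout: the dots in the groupId become directory separators, followed by the artifactId, the version, and a file named artifactId-version. Below is a minimal sketch of that mapping. It assumes a hypothetical helper `artifactPath` (not a Maven API) and uses forward slashes rather than the Windows separators shown in the log.

```java
class LocalRepoPath {
    // Builds an artifact's path relative to the local repository root
    // (the log above shows the root as C:\Users\Administrator\.m2\repository).
    static String artifactPath(String groupId, String artifactId, String version, String ext) {
        return groupId.replace('.', '/') + "/" + artifactId + "/" + version + "/"
            + artifactId + "-" + version + "." + ext;
    }

    public static void main(String[] args) {
        // Coordinates taken from the archetype:generate command in this article.
        System.out.println(artifactPath("org.conan.myhadoop.mr", "myHadoop", "1.0-SNAPSHOT", "jar"));
        System.out.println(artifactPath("org.conan.myhadoop.mr", "myHadoop", "1.0-SNAPSHOT", "pom"));
    }
}
```

The same rule locates the hadoop-core 1.0.3 jar under org/apache/hadoop/hadoop-core/1.0.3 in the local repository.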

2). Import the project into Eclipse

We have now created a basic Maven project; next, import it into Eclipse. Ideally the Maven plugin is already installed at this point.

3). Add the Hadoop dependency

Here I use Hadoop 1.0.3. Edit the file pom.xml:

~ vi pom.xml

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi=

"http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0

http://maven.apache.org/maven-v4_0_0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>org.conan.myhadoop.mr</groupId>

<artifactId>myHadoop</artifactId>

<packaging>jar</packaging>

<version>1.0-SNAPSHOT</version>

<name>myHadoop</name>

<url>http://maven.apache.org</url>

<dependencies>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-core</artifactId>

<version>1.0.3</version>

</dependency>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.4</version>

<scope>test</scope>

</dependency>

</dependencies>

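</project>

4). Download the dependencies

Download the dependencies:

Refresh the project in Eclipse:

The project's dependencies are loaded automatically and appear under the library path.

5). Download the Hadoop configuration files from the Hadoop cluster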

core-site.xml

hdfs-site.xml

mapred-site.xml

View core-site.xml:

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>fs.default.name</name>

<value>hdfs://master:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/conan/hadoop/tmp</value>

</property>

<property>

<name>io.sort.mb</name>

<value>256</value>

</property>

</configuration>

View hdfs-site.xml:

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>dfs.data.dir</name>

<value>/home/conan/hadoop/data</value>

</property>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

</configuration>

View mapred-site.xml:

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>mapred.job.tracker</name>

<value>hdfs://master:9001</value>

</property>

</configuration>

Save these files under the src/main/resources/hadoop directory.

Delete the automatically generated files App.java and AppTest.java.
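Each of the three files uses the same layout: a `configuration` root containing `property` elements, each with a `name` and a `value`. As a rough illustration of that structure, the sketch below reads such a document with the JDK's built-in XML parser. `SiteXmlReader` and `readProperties` are hypothetical names; Hadoop itself loads these files through its own `Configuration` class, which this sketch does not use.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

class SiteXmlReader {
    // Parses Hadoop-style <configuration><property> XML into name -> value pairs,
    // preserving the order in which the properties appear.
    static Map<String, String> readProperties(String xml) {
        try {
            Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
            Map<String, String> props = new LinkedHashMap<String, String>();
            NodeList nodes = doc.getElementsByTagName("property");
            for (int i = 0; i < nodes.getLength(); i++) {
                Element p = (Element) nodes.item(i);
                String name = p.getElementsByTagName("name").item(0).getTextContent().trim();
                String value = p.getElementsByTagName("value").item(0).getTextContent().trim();
                props.put(name, value);
            }
            return props;
        } catch (Exception e) {
            throw new RuntimeException("Could not parse configuration XML", e);
        }
    }

    public static void main(String[] args) {
        // Two properties copied from the core-site.xml shown above.
        String xml = "<?xml version=\"1.0\"?>"
            + "<configuration>"
            + "<property><name>fs.default.name</name><value>hdfs://master:9000</value></property>"
            + "<property><name>hadoop.tmp.dir</name><value>/home/conan/hadoop/tmp</value></property>"
            + "</configuration>";
        System.out.println(readProperties(xml));
    }
}
```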

6). Configure the local hosts file, adding a hostname mapping for master

~ vi c:/Windows/System32/drivers/etc/hosts

192.168.1.210 master
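A hosts file maps one IP address to one or more hostnames per line, with `#` starting a comment. The sketch below mimics that lookup on in-memory text to show what the `master` entry achieves. `HostsLookup.lookup` is a hypothetical helper; real name resolution is performed by the operating system, not by code like this.

```java
class HostsLookup {
    // Returns the IP address mapped to the hostname in hosts-file text,
    // or null when no line mentions that hostname.
    static String lookup(String hostsText, String hostname) {
        for (String line : hostsText.split("\\r?\\n")) {
            int hash = line.indexOf('#');
            if (hash >= 0) {
                line = line.substring(0, hash); // drop trailing comment
            }
            String[] fields = line.trim().split("\\s+");
            if (fields.length < 2) {
                continue; // blank line or an address without names
            }
            for (int i = 1; i < fields.length; i++) {
                if (fields[i].equalsIgnoreCase(hostname)) {
                    return fields[0];
                }
            }
        }
        return null;
    }

    public static void main(String[] args) {
        String hosts = "# sample hosts content\n"
            + "127.0.0.1 localhost\n"
            + "192.168.1.210 master\n";
        System.out.println(lookup(hosts, "master"));
    }
}
```

With this mapping in place, the `hdfs://master:9000` address in core-site.xml resolves to the cluster node from the development machine.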

5. Developing the MapReduce program

Write a simple MapReduce program that implements the wordcount function.

Create a new Java file: WordCount.java

package org.conan.myhadoop.mr;

import java.io.IOException;

import java.util.Iterator;

import java.util.StringTokenizer;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapred.FileInputFormat;

import org.apache.hadoop.mapred.FileOutputFormat;

import org.apache.hadoop.mapred.JobClient;

import org.apache.hadoop.mapred.JobConf;

import org.apache.hadoop.mapred.MapReduceBase;

import org.apache.hadoop.mapred.Mapper;

import org.apache.hadoop.mapred.OutputCollector;

import org.apache.hadoop.mapred.Reducer;

import org.apache.hadoop.mapred.Reporter;

import org.apache.hadoop.mapred.TextInputFormat;

import org.apache.hadoop.mapred.TextOutputFormat;

public class WordCount {

public static class WordCountMapper extends

MapReduceBase implements Mapper<Object, Text,

Text, IntWritable> {

private final static IntWritable one = new IntWritable(1);

private Text word = new Text();

@Override

public void map(Object key, Text value, OutputCollector<Text,

IntWritable> output, Reporter reporter) throws

IOException {

StringTokenizer itr = new StringTokenizer(value.toString());

while (itr.hasMoreTokens()) {

word.set(itr.nextToken());

output.collect(word, one);

}

}

}

public static class WordCountReducer extends

MapReduceBase implements Reducer<Text, IntWritable,

Text, IntWritable> {

private IntWritable result = new IntWritable();

@Override

public void reduce(Text key, Iterator<IntWritable> values,

OutputCollector<Text, IntWritable> output,

Reporter reporter) throws IOException {

int sum = 0;

while (values.hasNext()) {

sum += values.next().get();

}

result.set(sum);

output.collect(key, result);

}

}

public static void main(String[] args) throws

Exception {

String input = "hdfs://192.168.1.210:9000/user/hdfs/o_t_account";

String output = "hdfs://192.168.1.210:9000/user/hdfs/o_t_account/result";

JobConf conf = new JobConf(WordCount.class);

conf.setJobName("WordCount");

conf.addResource("classpath:/hadoop/core-site.xml");

conf.addResource("classpath:/hadoop/hdfs-site.xml");

conf.addResource("classpath:/hadoop/mapred-site.xml");

conf.setOutputKeyClass(Text.class);

conf.setOutputValueClass(IntWritable.class);

conf.setMapperClass(WordCountMapper.class);

conf.setCombinerClass(WordCountReducer.class);

conf.setReducerClass(WordCountReducer.class);

conf.setInputFormat(TextInputFormat.class);

conf.setOutputFormat(TextOutputFormat.class);

FileInputFormat.setInputPaths(conf, new Path(input));

FileOutputFormat.setOutputPath(conf, new Path(output));

JobClient.runJob(conf);

System.exit(0);

}

}
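The dataflow of the job above can be imitated without Hadoop. The sketch below assumes two hypothetical input splits and plain Java collections: each split is tokenized and pre-summed (the role the combiner plays), then the partial counts are summed per key (the role of the reducer). Because addition is associative and commutative, the same summing logic can serve as both combiner and reducer, which is why `WordCountReducer` is registered for both in `main`. `LocalWordCount` and its methods are illustrative names only.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

class LocalWordCount {
    // Map + combine for one input split: tokenize on whitespace and pre-sum the counts.
    static Map<String, Integer> mapAndCombine(List<String> lines) {
        Map<String, Integer> partial = new TreeMap<String, Integer>();
        for (String line : lines) {
            StringTokenizer itr = new StringTokenizer(line);
            while (itr.hasMoreTokens()) {
                partial.merge(itr.nextToken(), 1, Integer::sum);
            }
        }
        return partial;
    }

    // Reduce: sum the partial counts for each key across all splits.
    static Map<String, Integer> reduce(List<Map<String, Integer>> partials) {
        Map<String, Integer> total = new TreeMap<String, Integer>();
        for (Map<String, Integer> partial : partials) {
            for (Map.Entry<String, Integer> e : partial.entrySet()) {
                total.merge(e.getKey(), e.getValue(), Integer::sum);
            }
        }
        return total;
    }

    public static void main(String[] args) {
        List<Map<String, Integer>> partials = new ArrayList<Map<String, Integer>>();
        partials.add(mapAndCombine(Arrays.asList("a b", "b c"))); // hypothetical split 1
        partials.add(mapAndCombine(Arrays.asList("b a")));        // hypothetical split 2
        System.out.println(reduce(partials)); // prints {a=2, b=3, c=1}
    }
}
```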

Run it as a Java application.

Console error:

2013-9-30 19:25:02 org.apache.hadoop.util.NativeCodeLoader

WARNING: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

2013-9-30 19:25:02 org.apache.hadoop.security.UserGroupInformation doAs

SEVERE: PriviledgedActionException as:Administrator cause:java.io.IOException:

Failed to set permissions of path: \tmp\hadoop-Administrator\mapred\staging\Administrator1702422322\.staging to 0700

Exception in thread "main" java.io.IOException: Failed to set permissions of path:

\tmp\hadoop-Administrator\mapred\staging\Administrator1702422322\.staging to 0700

at org.apache.hadoop.fs.FileUtil.checkReturnValue(FileUtil.java:689)

at org.apache.hadoop.fs.FileUtil.setPermission(FileUtil.java:662)

at org.apache.hadoop.fs.RawLocalFileSystem.setPermission(RawLocalFileSystem.java:509)

at org.apache.hadoop.fs.RawLocalFileSystem.mkdirs(RawLocalFileSystem.java:344)

at org.apache.hadoop.fs.FilterFileSystem.mkdirs(FilterFileSystem.java:189)

at org.apache.hadoop.mapreduce.JobSubmissionFiles.getStagingDir(JobSubmissionFiles.java:116)

at org.apache.hadoop.mapred.JobClient$2.run(JobClient.java:856)

at org.apache.hadoop.mapred.JobClient$2.run(JobClient.java:850)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:396)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1121)

at org.apache.hadoop.mapred.JobClient.submitJobInternal(JobClient.java:850)

at org.apache.hadoop.mapred.JobClient.submitJob(JobClient.java:824)

at org.apache.hadoop.mapred.JobClient.runJob(JobClient.java:1261)

at org.conan.myhadoop.mr.WordCount.main(WordCount.java:78)

This error is specific to developing on Windows. It is a file permission problem, and the program runs normally on Linux.

The fix is to edit the file /hadoop-1.0.3/src/core/org/apache/hadoop/fs/FileUtil.java:

comment out lines 688-692, then recompile the source code and build a new hadoop.jar package.

685 private static void checkReturnValue(boolean rv, File p,

686 FsPermission permission

687 ) throws IOException {

688 /*if (!rv) {

689 throw new IOException("Failed to set permissions of path: " + p +

690 " to " +

691 String.format("%04o", permission.toShort()));

692 }*/

693 }

I built my own hadoop-core-1.0.3.jar package and placed it under lib.

We also need to replace the Hadoop library in the local Maven repository.

~ cp lib/hadoop-core-1.0.3.jar C:\Users\Administrator\.m2\repository\org\apache\hadoop\hadoop-core\1.0.3\hadoop-core-1.0.3.jar

Run the Java application again. Console output:

2013-9-30 19:50:49 org.apache.hadoop.util.NativeCodeLoader

WARNING: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

2013-9-30 19:50:49 org.apache.hadoop.mapred.JobClient copyAndConfigureFiles

WARNING: Use GenericOptionsParser for parsing the arguments. Applications should implement Tool for the same.

2013-9-30 19:50:49 org.apache.hadoop.mapred.JobClient copyAndConfigureFiles

WARNING: No job jar file set. User classes may not be found. See JobConf(Class) or JobConf#setJar(String).

2013-9-30 19:50:49 org.apache.hadoop.io.compress.snappy.LoadSnappy

WARNING: Snappy native library not loaded

2013-9-30 19:50:49 org.apache.hadoop.mapred.FileInputFormat listStatus

INFO: Total input paths to process : 4

2013-9-30 19:50:50 org.apache.hadoop.mapred.JobClient monitorAndPrintJob

INFO: Running job: job_local_0001

2013-9-30 19:50:50 org.apache.hadoop.mapred.Task initialize

INFO: Using ResourceCalculatorPlugin : null

2013-9-30 19:50:50 org.apache.hadoop.mapred.MapTask runOldMapper

INFO: numReduceTasks: 1

2013-9-30 19:50:50 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: io.sort.mb = 100

2013-9-30 19:50:50 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: data buffer = 79691776/99614720

2013-9-30 19:50:50 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: record buffer = 262144/327680

2013-9-30 19:50:50 org.apache.hadoop.mapred.MapTask$MapOutputBuffer flush

INFO: Starting flush of map output

2013-9-30 19:50:50 org.apache.hadoop.mapred.MapTask$MapOutputBuffer sortAndSpill

INFO: Finished spill 0

2013-9-30 19:50:50 org.apache.hadoop.mapred.Task done

INFO: Task:attempt_local_0001_m_000000_0 is done. And is in the process of commiting

2013-9-30 19:50:51 org.apache.hadoop.mapred.JobClient monitorAndPrintJob

INFO: map 0% reduce 0%

2013-9-30 19:50:53 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate

INFO: hdfs://192.168.1.210:9000/user/hdfs/o_t_account/part-m-00003:0+119

2013-9-30 19:50:53 org.apache.hadoop.mapred.Task sendDone

INFO: Task 'attempt_local_0001_m_000000_0' done.

2013-9-30 19:50:53 org.apache.hadoop.mapred.Task initialize

INFO: Using ResourceCalculatorPlugin : null

2013-9-30 19:50:53 org.apache.hadoop.mapred.MapTask runOldMapper

INFO: numReduceTasks: 1

2013-9-30 19:50:53 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: io.sort.mb = 100

2013-9-30 19:50:53 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: data buffer = 79691776/99614720

2013-9-30 19:50:53 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: record buffer = 262144/327680

2013-9-30 19:50:53 org.apache.hadoop.mapred.MapTask$MapOutputBuffer flush

INFO: Starting flush of map output

2013-9-30 19:50:53 org.apache.hadoop.mapred.MapTask$MapOutputBuffer sortAndSpill

INFO: Finished spill 0

2013-9-30 19:50:53 org.apache.hadoop.mapred.Task done

INFO: Task:attempt_local_0001_m_000001_0 is done. And is in the process of commiting

2013-9-30 19:50:54 org.apache.hadoop.mapred.JobClient monitorAndPrintJob

INFO: map 100% reduce 0%

2013-9-30 19:50:56 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate

INFO: hdfs://192.168.1.210:9000/user/hdfs/o_t_account/part-m-00000:0+113

2013-9-30 19:50:56 org.apache.hadoop.mapred.Task sendDone

INFO: Task 'attempt_local_0001_m_000001_0' done.

2013-9-30 19:50:56 org.apache.hadoop.mapred.Task initialize

INFO: Using ResourceCalculatorPlugin : null

2013-9-30 19:50:56 org.apache.hadoop.mapred.MapTask runOldMapper

INFO: numReduceTasks: 1

2013-9-30 19:50:56 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: io.sort.mb = 100

2013-9-30 19:50:56 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: data buffer = 79691776/99614720

2013-9-30 19:50:56 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: record buffer = 262144/327680

2013-9-30 19:50:56 org.apache.hadoop.mapred.MapTask$MapOutputBuffer flush

INFO: Starting flush of map output

2013-9-30 19:50:56 org.apache.hadoop.mapred.MapTask$MapOutputBuffer sortAndSpill

INFO: Finished spill 0

2013-9-30 19:50:56 org.apache.hadoop.mapred.Task done

INFO: Task:attempt_local_0001_m_000002_0 is done. And is in the process of commiting

2013-9-30 19:50:59 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate

INFO: hdfs://192.168.1.210:9000/user/hdfs/o_t_account/part-m-00001:0+110

2013-9-30 19:50:59 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate

INFO: hdfs://192.168.1.210:9000/user/hdfs/o_t_account/part-m-00001:0+110

2013-9-30 19:50:59 org.apache.hadoop.mapred.Task sendDone

INFO: Task 'attempt_local_0001_m_000002_0' done.

2013-9-30 19:50:59 org.apache.hadoop.mapred.Task initialize

INFO: Using ResourceCalculatorPlugin : null

2013-9-30 19:50:59 org.apache.hadoop.mapred.MapTask runOldMapper

INFO: numReduceTasks: 1

2013-9-30 19:50:59 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: io.sort.mb = 100

2013-9-30 19:50:59 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: data buffer = 79691776/99614720

2013-9-30 19:50:59 org.apache.hadoop.mapred.MapTask$MapOutputBuffer

INFO: record buffer = 262144/327680

2013-9-30 19:50:59 org.apache.hadoop.mapred.MapTask$MapOutputBuffer flush

INFO: Starting flush of map output

2013-9-30 19:50:59 org.apache.hadoop.mapred.MapTask$MapOutputBuffer sortAndSpill

INFO: Finished spill 0

2013-9-30 19:50:59 org.apache.hadoop.mapred.Task done

INFO: Task:attempt_local_0001_m_000003_0 is done. And is in the process of commiting

2013-9-30 19:51:02 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate

INFO: hdfs://192.168.1.210:9000/user/hdfs/o_t_account/part-m-00002:0+79

2013-9-30 19:51:02 org.apache.hadoop.mapred.Task sendDone

INFO: Task 'attempt_local_0001_m_000003_0' done.

2013-9-30 19:51:02 org.apache.hadoop.mapred.Task initialize

INFO: Using ResourceCalculatorPlugin : null

2013-9-30 19:51:02 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate

INFO:

2013-9-30 19:51:02 org.apache.hadoop.mapred.Merger$MergeQueue merge

INFO: Merging 4 sorted segments

2013-9-30 19:51:02 org.apache.hadoop.mapred.Merger$MergeQueue merge

INFO: Down to the last merge-pass, with 4 segments left of total size: 442 bytes

2013-9-30 19:51:02 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate

INFO:

2013-9-30 19:51:02 org.apache.hadoop.mapred.Task done

INFO: Task:attempt_local_0001_r_000000_0 is done. And is in the process of commiting

2013-9-30 19:51:02 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate

INFO:

2013-9-30 19:51:02 org.apache.hadoop.mapred.Task commit

INFO: Task attempt_local_0001_r_000000_0 is allowed to commit now

2013-9-30 19:51:02 org.apache.hadoop.mapred.FileOutputCommitter commitTask

INFO: Saved output of task 'attempt_local_0001_r_000000_0' to hdfs://192.168.1.210:9000/user/hdfs/o_t_account/result

2013-9-30 19:51:05 org.apache.hadoop.mapred.LocalJobRunner$Job statusUpdate

INFO: reduce > reduce

2013-9-30 19:51:05 org.apache.hadoop.mapred.Task sendDone

INFO: Task 'attempt_local_0001_r_000000_0' done.

2013-9-30 19:51:06 org.apache.hadoop.mapred.JobClient monitorAndPrintJob

INFO: map 100% reduce 100%

2013-9-30 19:51:06 org.apache.hadoop.mapred.JobClient monitorAndPrintJob

INFO: Job complete: job_local_0001

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Counters: 20

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: File Input Format Counters

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Bytes Read=421

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: File Output Format Counters

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Bytes Written=348

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: FileSystemCounters

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: FILE_BYTES_READ=7377

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: HDFS_BYTES_READ=1535

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: FILE_BYTES_WRITTEN=209510

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: HDFS_BYTES_WRITTEN=348

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Map-Reduce Framework

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Map output materialized bytes=458

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Map input records=11

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Reduce shuffle bytes=0

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Spilled Records=30

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Map output bytes=509

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Total committed heap usage (bytes)=1838546944

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Map input bytes=421

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: SPLIT_RAW_BYTES=452

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Combine input records=22

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Reduce input records=15

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Reduce input groups=13

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Combine output records=15

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Reduce output records=13

2013-9-30 19:51:06 org.apache.hadoop.mapred.Counters log

INFO: Map output records=22

The wordcount program ran successfully. We can view the output with the following commands:

~ hadoop fs -ls hdfs://192.168.1.210:9000/user/hdfs/o_t_account/result

Found 2 items

-rw-r--r-- 3 Administrator supergroup 0 2013-09-30 19:51 /user/hdfs/o_t_account/result/_SUCCESS

-rw-r--r-- 3 Administrator supergroup 348 2013-09-30 19:51 /user/hdfs/o_t_account/result/part-00000

~ hadoop fs -cat hdfs://192.168.1.210:9000/user/hdfs/o_t_account/result/part-00000

1,abc@163.com,2013-04-22 1

10,ade121@sohu.com,2013-04-23 1

11,addde@sohu.com,2013-04-23 1

17:21:24.0 5

2,dedac@163.com,2013-04-22 1

20:21:39.0 6

3,qq8fed@163.com,2013-04-22 1

4,qw1@163.com,2013-04-22 1

5,af3d@163.com,2013-04-22 1

6,ab34@163.com,2013-04-22 1

7,q8d1@gmail.com,2013-04-23 1

8,conan@gmail.com,2013-04-23 1

9,adeg@sohu.com,2013-04-23 1
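`StringTokenizer` with its default delimiters splits only on whitespace, which explains why each key in the result is either a whole comma-separated fragment or a bare time value. The input row in the sketch below is an assumption reconstructed from the output (the input files themselves are not shown in this article), and `TokenizeRecord` is a hypothetical name.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.StringTokenizer;

class TokenizeRecord {
    // Splits a line the same way WordCountMapper does: on whitespace only.
    static List<String> tokens(String line) {
        List<String> out = new ArrayList<String>();
        StringTokenizer itr = new StringTokenizer(line);
        while (itr.hasMoreTokens()) {
            out.add(itr.nextToken());
        }
        return out;
    }

    public static void main(String[] args) {
        // Assumed shape of one input record: id,email,date time
        String row = "1,abc@163.com,2013-04-22 20:21:39.0";
        System.out.println(tokens(row)); // prints [1,abc@163.com,2013-04-22, 20:21:39.0]
    }
}
```

Under that assumption each record yields two tokens, which is consistent with the counters in the log: 11 map input records and 22 map output records.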

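In this way we have set up development on Windows 7: Maven builds the Hadoop dependency environment, and we develop the MapReduce program in Eclipse and run it as a Java application. In the run shown above, the job ID job_local_0001 and the LocalJobRunner entries in the log indicate that the job executed in the local JVM, reading its input from and writing its output to the remote HDFS at 192.168.1.210, with the log printed to the Eclipse console.

6. Template project uploaded to GitHub

https://github.com/bsspirit/maven_hadoop_template

You can download this project and use it as a starting point for development.

~ git clone https://github.com/bsspirit/maven_hadoop_template.git

With this first step complete, we will next move on to MapReduce development in practice.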